Today's links

- AI "journalists" prove that media bosses don't give a shit: In case there was ever any doubt.

- Hey look at this: Delights to delectate.

- Object permanence: Eggflation x excuseflation; Haunted Mansion stretch portraits; "Lost Souls"; Time Magazine x the first Worldcon; Obama v Freedom of Information Act; Ragequitting jihadi doxxes ISIS; OSI v DRM in standards.

- Upcoming appearances: Where to find me.

- Recent appearances: Where I've been.

- Latest books: You keep readin' 'em, I'll keep writin' 'em.

- Upcoming books: Like I said, I'll keep writin' 'em.

- Colophon: All the rest.

AI "journalists" prove that media bosses don't give a shit

Ed Zitron's a fantastic journalist, capable of turning a close read of AI companies' balance-sheets into an incandescent, exquisitely informed, eye-wateringly profane rant:

https://www.wheresyoured.at/the-ai-bubble-is-an-information-war/

That's "Ed, the financial sleuth." But Ed has another persona, one we don't get nearly enough of, which I delight in: "Ed the stunt journalist." For example, in 2024, Ed bought Amazon's bestselling laptop, "a $238 Acer Aspire 1 with a four-year-old Celeron N4500 Processor, 4GB of DDR4 RAM, and 128GB of slow eMMC storage" and wrote about the experience of using the internet with this popular, terrible machine:

https://www.wheresyoured.at/never-forgive-them/

It sucked, of course, but it sucked in a way that the median tech-informed web user has never experienced. Not only was this machine dramatically underpowered, but its defaults were set to accept all manner of CPU-consuming, screen-filling ad garbage and bloatware. If you or I had this machine, we would immediately hunt down all those settings and nuke them from orbit, but the kind of person who buys a $238 Acer Aspire from Amazon is unlikely to know how to do any of that and will suffer through it every day, forever.

Normally the "digital divide" refers to access to technology, but as access becomes less and less of an issue, the real divide is between people who know how to defend themselves from the cruel indifference of technology designers and people who are helpless before their enshittificatory gambits.

Zitron's stunt stuck with me because it's so simple and so apt. Every tech designer should be forced to use a stock configuration Acer Aspire 1 for a minimum of three hours/day, just as every aviation CEO should be required to fly basic coach at least one out of three flights (and one of two long-haul flights).

To that, I will add: every news executive should be forced to consume the news in a stock browser with no adblock, no accessibility plugins, no Reader View, none of the add-ons that make reading the web bearable:

https://pluralistic.net/2026/03/07/reader-mode/#personal-disenshittification

But in all honesty, I fear this would not make much of a difference, because I suspect that the people who oversee the design of modern news sites don't care about the news at all. They don't read the news, they don't consume the news. They hate the news. They view the news as a necessary evil within a wider gambit to deploy adware, malware, pop-ups, and auto-play video.

Rawdogging a Yahoo News article means fighting through a forest of pop-ups, pop-unders, autoplay video, interrupters, consent screens, modal dialogs, modeless dialogs – a blizzard of news-obscuring crapware that oozes contempt for the material it befogs. Irrespective of the words and icons displayed in these DOM objects, they all carry the same message: "The news on this page does not matter."

The owners of news services view the news as a necessary evil. They don't run news organizations: they run annoying pop-up and cookie-setting factories, each with an inconvenient, vestigial news entity attached to it. News exists on sufferance, and if it were possible to do away with it altogether, the owners would.

That turns out to be the defining characteristic of work that is turned over to AI. Think of the rapid replacement of customer service call centers with AI. Long before companies shifted their customer service to AI chatbots, they shifted the work to overseas call centers where workers were prohibited from diverging from a script that made it all but impossible to resolve your problems:

https://pluralistic.net/2025/08/06/unmerchantable-substitute-goods/#customer-disservice

These companies didn't want to do customer service in the first place, so they sent the work to India. Then, once it became possible to replace Indian call center workers who weren't allowed to solve your problems with chatbots that couldn't resolve your problems, they fired the Indian call center workers and replaced them with chatbots. Ironically, many of these chatbots turn out to be call center workers pretending to be chatbots (as the Indian tech joke goes, "AI stands for 'Absent Indians'"):

https://pluralistic.net/2024/01/29/pay-no-attention/#to-the-little-man-behind-the-curtain

"We used an AI to do this" is increasingly a way of saying, "We didn't want to do this in the first place and we don't care if it's done well." That's why DOGE replaced the call center reps at US Customs and Immigration with a chatbot that tells you to read a PDF and then disconnects the call:

https://pluralistic.net/2026/02/06/doge-ball/#n-600

The Trump administration doesn't want to hear from immigrants who are trying to file their bewildering paperwork correctly. Incorrect immigration paperwork is a feature, not a bug, since it can be refined into a pretext to kidnap someone, imprison them in a gulag long enough to line the pockets of a Beltway Bandit with a no-bid contract to operate an onshore black site, and then deport them to a country they have no connection with, generating a fat payout for another Beltway Bandit with the no-bid contract to fly kidnapped migrants to distant hellholes.

If the purpose of a customer service department is to tell people to go fuck themselves, then a chatbot is obviously the most efficient way of delivering the service. It's not just that a chatbot charges less to tell people to go fuck themselves than a human being – the chatbot itself means "go fuck yourself." A chatbot is basically a "go fuck yourself" emoji. Perhaps this is why every AI icon looks like a butthole:

https://velvetshark.com/ai-company-logos-that-look-like-buttholes

So it's no surprise that media bosses are so enthusiastic about replacing writers with chatbots. They hate the news and want it to go away. Outsourcing the writing to AI is just another way of devaluing it, adjacent to the existing enshittification that sees the news buried in popups, autoplays, consent dialogs, interrupters and the eleventy-million horrors that a stock browser with default settings will shove into your eyeballs on behalf of any webpage that demands them:

https://pluralistic.net/2024/05/07/treacherous-computing/#rewilding-the-internet

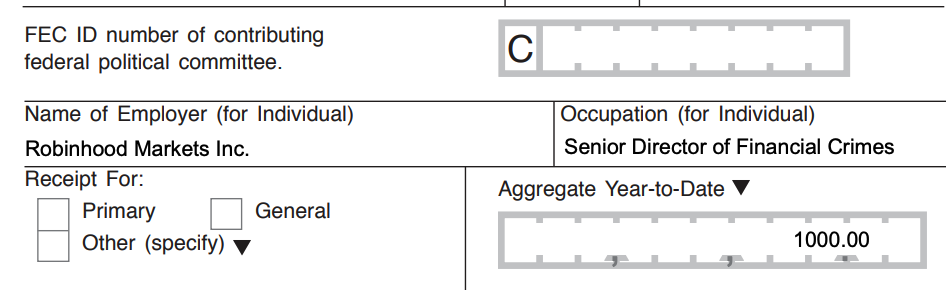

Remember that summer reading list that Hearst distributed to newspapers around the country, which turned out to be stuffed with "hallucinated" titles? At first, the internet delighted in dunking on Marco Buscaglia, the writer whose byline the list ran under. But as 404 Media's Jason Koebler unearthed, Buscaglia had been set up to fail, tasked with writing most of a 64-page insert that would normally have been the work of dozens of writers, editors and fact checkers, all on his own.

When Hearst hires one freelancer to do the work of dozens, they are saying, "We do not give a shit about the quality of this work." It is literally impossible for any writer to produce something good under those conditions. The purpose of Hearst's syndicated summer guide was to bulk out the newspapers that had been stripmined by their corporate owners, slimmed down to a handful of pages that are mostly ads and wire-service copy. The mere fact that this supplement was handed to a single freelancer blares "Go fuck yourself" long before you clap eyes on the actual words printed on the pages.

The capital class is in the grip of a bizarre form of AI psychosis: the fantasy of a world without people, where any fool idea that pops into a boss's head can be turned into a product without having to negotiate its creation with skilled workers who might point out that your idea is pretty fucking stupid:

https://pluralistic.net/2026/01/05/fisher-price-steering-wheel/#billionaire-solipsism

For these AI boosters, the point isn't to create an AI that can do the work as well as a person – it's to condition the world to accept the lower-quality work that will come from a chatbot. Rather than reading a summer reading list of actual books, perhaps you could be satisfied with a summer reading list of hallucinated books that are at least statistically probable book-shaped imaginaries?

The bosses dreaming up use-cases for AI start from a posture of profound and proud ignorance of how workers who do useful things operate. They ask themselves, "If I was a ______, how would I do the job?" and then they ask an AI to do that, and declare the job done. They produce utility-shaped statistical artifacts, not utilities.

Take Grammarly, a company that offers statistical inferences about likely errors in your text. Grammar checkers aren't a terrible idea on their face, and I've heard from many people who struggle to express themselves in writing (either because of their communications style, or because they don't speak English as a first language) for whom apps like Grammarly are useful.

But Grammarly has just rolled out an AI tool that is so obviously contemptuous of writing that they might as well have called it "Go fuck yourself, by Grammarly." The new product is called "Expert Review," and it promises to give you writing advice "inspired" by writers whose writing they have ingested. I am one of these virtual "writing teachers" you can pay Grammarly for:

https://www.theverge.com/ai-artificial-intelligence/890921/grammarly-ai-expert-reviews

This is not how writing advice works. When I teach the Clarion Science Fiction and Fantasy Writers' workshop, my job isn't to train the students to produce work that is strongly statistically correlated with the sentence structure and word choices in my own writing. My job – the job of any writing teacher – is to try and understand the student's writing style and artistic intent, and to provide advice for developing that style to express that intent.

What Grammarly is offering isn't writing advice, it's stylometry, a computational linguistics technique for evaluating the likelihood that two candidate texts were written by the same person. Stylometry is a very cool discipline (as is adversarial stylometry, a set of techniques to obscure the authorship of a text):

https://en.wikipedia.org/wiki/Stylometry
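To make the gap between stylometry and teaching concrete, here is a minimal sketch of the kind of word-frequency comparison that stylometry builds on. It is an illustration only, not a description of how Grammarly (or any real stylometry tool) works: the `FUNCTION_WORDS` list, the function names, and the choice of cosine similarity are all assumptions made for this example, and real systems use far richer feature sets.

```python
import math
import re
from collections import Counter

# Hypothetical, deliberately tiny feature set: a handful of common
# function words. Real stylometry uses hundreds of features.
FUNCTION_WORDS = ["the", "and", "of", "to", "a", "in", "that", "it", "is", "but"]


def profile(text):
    """Return the relative frequency of each function word in `text`."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words)
    total = len(words) or 1  # avoid dividing by zero on empty input
    return [counts[w] / total for w in FUNCTION_WORDS]


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length frequency vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # no function words found in at least one text
    return dot / (norm_a * norm_b)


def style_similarity(text_a, text_b):
    """Score how closely two texts' function-word habits match (0.0 to 1.0)."""
    return cosine_similarity(profile(text_a), profile(text_b))


if __name__ == "__main__":
    draft = "It is the job of the teacher to understand the student."
    corpus = "The point of the exercise is to nudge a draft toward a number."
    print(round(style_similarity(draft, corpus), 3))
```

Everything this sketch knows about a writer is a list of ten numbers. Nothing in it represents intent, audience or meaning, which is the point: a score like this can say whether two texts resemble each other, but not whether either one is any good.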

But stylometry has nothing to do with teaching someone how to write. Even if you want to write a pastiche in the style of some writer you admire (or want to send up), word choices and sentence structure are only incidental to capturing that writer's style. To reduce "style" to "stylometry" is to commit the cardinal sin of technical analysis: namely, incinerating all the squishy qualitative aspects that can't be readily fed into a model and doing math on the resulting dubious quantitative residue:

https://locusmag.com/feature/cory-doctorow-qualia/

If you wanted to teach a chatbot to teach writing like a writer, you would – at a minimum – have to train that chatbot on the instruction that writer gives, not the material that writer has published. Nor can you infer how a writer would speak to a student by producing a statistical model of the finished work that writer has published. "Published work" has only an incidental relationship to "pedagogical communication."

Critics of Grammarly are mostly focused on the effrontery of using writers' names without their permission. But I'm not bothered by that, honestly. So long as no one is being tricked into thinking that I endorsed a product or service, you don't need my permission to say that I inspired it (even if I think it's shit).

What I find absolutely offensive about Grammarly is not that they took my name in vain, but rather, that they reduced the complex, important business of teaching writing to a statistical exercise in nudging your work into a word frequency distribution that hews closely to the average of some writer's published corpus. This is Grammarly's fraud: not telling people that they're being "taught by Cory Doctorow," but rather, telling people that they are being "taught" anything.

Reducing "teaching writing" to "statistical comparisons with another writer's published work" is another way of saying "go fuck yourself" – not to the writers whose identities Grammarly has hijacked, but to the customers they are tricking into using this terrible, substandard, damaging product.

Preying on aspiring writers is a grift as old as the publishing industry. The world is full of dirtbag "story doctors," vanity presses, fake literary agents and other flimflam artists who exploit people's natural desire to be understood to steal from them.

Grammarly is yet another company for whom "AI" is just a way to lower quality in the hopes of lowering expectations. For Grammarly, helping writers with their prose is an irritating adjunct to the company's main business of separating marks from their money.

In business theory, the perfect firm is one that charges infinity for its products and pays zero for its inputs (you know, "scholarly publishing"). For bosses, AI is a way to shift their firm towards this ideal.

In this regard, AI is connected to the long tradition of capitalist innovation, in which new production efficiencies are used to increase quantity at the expense of quality. This has been true since the Luddite uprising, in which skilled technical workers who cared deeply about the textiles they produced using complex machines railed against a new kind of machine that produced manifestly lower quality fabric in much higher volumes:

https://pluralistic.net/2023/09/26/enochs-hammer/#thats-fronkonsteen

It's not hard to find credible, skilled people who have stories about using AI to make their work better. Elsewhere, I've called these people "centaurs" – human beings who are assisted by machines. These people are embracing the socialist mode of automation: they are using automation to improve quality, not quantity.

Whenever you hear a skilled practitioner talk about how they are able to hand off a time-consuming, low-value, low-judgment task to a model so they can focus on the part that means the most to them, you are talking to a centaur. Of course, it's possible for skilled practitioners to produce bad work – some of my favorite writers have published some very bad books indeed – but that isn't a function of automation, that's just human fallibility.

A reverse centaur (a person conscripted to act as a peripheral to a machine) is trapped by the capitalist mode of automation: quantity over quality. Machines work faster and longer than humans, and the faster and harder a human can be made to work, the closer the firm can come to the ideal of paying zero for its inputs.

A reverse centaur works for a machine that is set to run at the absolute limit of its human peripheral's capability and endurance. A reverse centaur is expected to produce with the mechanical regularity of a machine, catching every mistake the machine makes. A reverse centaur is the machine's accountability sink and moral crumple-zone:

https://estsjournal.org/index.php/ests/article/view/260

AI is a normal technology, just another set of automation tools that have some uses for some users. The thing that makes AI signify "go fuck yourself" isn't some intrinsic factor of large language models or transformers. It's the capitalist mode of automation, increasing quantity at the expense of quality. Automation doesn't have to be a way to reduce expectations in the hopes of selling worse things for more money – but without some form of external constraint (unions, regulation, competition), that is inevitably how companies will wield any automation, including and especially AI.

Hey look at this

- Assassination markets are legal now but Trump doesn’t have to worry https://protos.com/assassination-markets-are-legal-now-but-trump-doesnt-have-to-worry/

- You Are Being Lied to About Algorithms https://www.usermag.co/p/you-are-being-lied-to-about-algorithms

- States’ trial against Live Nation could move forward as soon as next week https://www.theverge.com/policy/892353/live-nation-ticketmaster-doj-states-settlement

- Neuromancer / Count Zero / Mona Lisa Overdrive https://macintoshgarden.org/apps/neuromancer-count-zero-mona-lisa-overdrive

- Judge Slams Secret DOJ-Live Nation Settlement Process as "Mind-boggling" https://www.bigtechontrial.com/p/judge-slams-secret-doj-live-nation

Object permanence

#15yrsago History of the Disney Haunted Mansion’s stretching portraits https://longforgottenhauntedmansion.blogspot.com/2011/03/many-faces-ofthe-other-stretching.html

#15yrsago Readers Against DRM (logo) https://web.archive.org/web/20110311213843/https://readersbillofrights.info/RAD

#15yrsago Lost Souls: Audio adaptation of a classic vampire novel https://memex.craphound.com/2011/03/10/lost-souls-audio-adaptation-of-a-classic-vampire-novel/

#15yrsago Time’s appraisal of the first WorldCon https://web.archive.org/web/20080906184034/https://time.com/time/magazine/article/0,9171,761661-1,00.html

#15yrsago Insipid thrift-store landscapes improved with monsters https://imgur.com/involuntary-collaborations-i-buy-other-peoples-landscape-paintings-yard-sales-goodwill-put-monsters-them-r-pics-2780-march-11-2011-Oujbl

#15yrsago Fight 8-track piracy with this 1976 record sleeve https://www.flickr.com/photos/supraterra/5516574440/in/pool-41894168726@N01

#15yrsago Michigan Republicans create “financial martial law”; appointees to replace elected local officials https://web.archive.org/web/20120409124750/http://www.dailytribune.com/articles/2011/03/10/news/doc4d78d0d4d764d009636769.txt

#10yrsago Lawsuit reveals Obama’s DoJ sabotaged Freedom of Information Act transparency https://web.archive.org/web/20160309183758/https://news.vice.com/article/it-took-a-foia-lawsuit-to-uncover-how-the-obama-administration-killed-foia-reform

#10yrsago If the FBI can force decryption backdoors, why not backdoors to turn on your phone’s camera? https://www.theguardian.com/technology/2016/mar/10/apple-fbi-could-force-us-to-turn-on-iphone-cameras-microphones

#10yrsago Disgruntled IS defector dumps full details of tens of thousands of jihadis https://web.archive.org/web/20160330061315/https://news.sky.com/story/1656777/is-documents-identify-thousands-of-jihadis

#10yrsago Using distributed code-signatures to make it much harder to order secret backdoors https://arstechnica.com/information-technology/2016/03/cothority-to-apple-lets-make-secret-backdoors-impossible/

#10yrsago Open Source Initiative says standards aren’t open unless they protect security researchers and interoperability https://web.archive.org/web/20190822053758/https://www.eff.org/deeplinks/2016/03/-are-only-open-if-they-protect-security-and-interoperability

#1yrago Eggflation is excuseflation https://pluralistic.net/2025/03/10/demand-and-supply/#keep-cal-maine-and-carry-on

Upcoming appearances

- Barcelona: Enshittification with Simona Levi/Xnet (Llibreria Finestres), Mar 20

https://www.llibreriafinestres.com/evento/cory-doctorow/

- Berkeley: Bioneers keynote, Mar 27

https://conference.bioneers.org/

- Montreal: Bronfman Lecture (McGill), Apr 10

https://www.eventbrite.ca/e/artificial-intelligence-the-ultimate-disrupter-tickets-1982706623885

- London: Resisting Big Tech Empires (LSBU)

https://www.tickettailor.com/events/globaljusticenow/2042691

- Berlin: Re:publica, May 18-20

https://re-publica.com/de/news/rp26-sprecher-cory-doctorow

- Berlin: Enshittification at Otherland Books, May 19

https://www.otherland-berlin.de/de/event-details/cory-doctorow.html

- Hay-on-Wye: HowTheLightGetsIn, May 22-25

https://howthelightgetsin.org/festivals/hay/big-ideas-2

Recent appearances

- Launch for Cindy Cohn's "Privacy's Defender" (City Lights)

https://www.youtube.com/watch?v=WuVCm2PUalU

- Chicken Mating Harnesses (This Week in Tech)

https://twit.tv/shows/this-week-in-tech/episodes/1074

- The Virtual Jewel Box (U Utah)

https://tanner.utah.edu/podcast/enshittification-cory-doctorow-matthew-potolsky/

- Tanner Humanities Lecture (U Utah)

https://www.youtube.com/watch?v=i6Yf1nSyekI

- The Lost Cause

https://streets.mn/2026/03/02/book-club-the-lost-cause/

Latest books

- "Canny Valley": A limited edition collection of the collages I create for Pluralistic, self-published, September 2025 https://pluralistic.net/2025/09/04/illustrious/#chairman-bruce

- "Enshittification: Why Everything Suddenly Got Worse and What to Do About It," Farrar, Straus and Giroux, October 7 2025

https://us.macmillan.com/books/9780374619329/enshittification/

- "Picks and Shovels": a sequel to "Red Team Blues," about the heroic era of the PC, Tor Books (US), Head of Zeus (UK), February 2025 (https://us.macmillan.com/books/9781250865908/picksandshovels).

- "The Bezzle": a sequel to "Red Team Blues," about prison-tech and other grifts, Tor Books (US), Head of Zeus (UK), February 2024 (thebezzle.org).

- "The Lost Cause": a solarpunk novel of hope in the climate emergency, Tor Books (US), Head of Zeus (UK), November 2023 (http://lost-cause.org).

- "The Internet Con": A nonfiction book about interoperability and Big Tech (Verso) September 2023 (http://seizethemeansofcomputation.org). Signed copies at Book Soup (https://www.booksoup.com/book/9781804291245).

- "Red Team Blues": "A grabby, compulsive thriller that will leave you knowing more about how the world works than you did before." Tor Books http://redteamblues.com.

- "Chokepoint Capitalism: How to Beat Big Tech, Tame Big Content, and Get Artists Paid," with Rebecca Giblin, on how to unrig the markets for creative labor, Beacon Press/Scribe 2022 https://chokepointcapitalism.com

Upcoming books

- "The Reverse-Centaur's Guide to AI," a short book about being a better AI critic, Farrar, Straus and Giroux, June 2026

- "Enshittification: Why Everything Suddenly Got Worse and What to Do About It" (the graphic novel), First Second, 2026

- "The Post-American Internet," a geopolitical sequel of sorts to Enshittification, Farrar, Straus and Giroux, 2027

- "Unauthorized Bread": a middle-grades graphic novel adapted from my novella about refugees, toasters and DRM, First Second, 2027

- "The Memex Method," Farrar, Straus and Giroux, 2027

Colophon

Currently writing: "The Post-American Internet," a sequel to "Enshittification," about the better world the rest of us get to have now that Trump has torched America (1031 words today, 47410 total)

- "The Reverse Centaur's Guide to AI," a short book for Farrar, Straus and Giroux about being an effective AI critic. LEGAL REVIEW AND COPYEDIT COMPLETE.

- "The Post-American Internet," a short book about internet policy in the age of Trumpism. PLANNING.

- A Little Brother short story about DIY insulin. PLANNING.

This work – excluding any serialized fiction – is licensed under a Creative Commons Attribution 4.0 license. That means you can use it any way you like, including commercially, provided that you attribute it to me, Cory Doctorow, and include a link to pluralistic.net.

https://creativecommons.org/licenses/by/4.0/

Quotations and images are not included in this license; they are included either under a limitation or exception to copyright, or on the basis of a separate license. Please exercise caution.

How to get Pluralistic:

Blog (no ads, tracking, or data-collection):

Newsletter (no ads, tracking, or data-collection):

https://pluralistic.net/plura-list

Mastodon (no ads, tracking, or data-collection):

Bluesky (no ads, possible tracking and data-collection):

https://bsky.app/profile/doctorow.pluralistic.net

Medium (no ads, paywalled):

https://doctorow.medium.com/

Twitter:

https://twitter.com/doctorow

Tumblr (mass-scale, unrestricted, third-party surveillance and advertising):

https://mostlysignssomeportents.tumblr.com/tagged/pluralistic

"When life gives you SARS, you make sarsaparilla" -Joey "Accordion Guy" DeVilla

READ CAREFULLY: By reading this, you agree, on behalf of your employer, to release me from all obligations and waivers arising from any and all NON-NEGOTIATED agreements, licenses, terms-of-service, shrinkwrap, clickwrap, browsewrap, confidentiality, non-disclosure, non-compete and acceptable use policies ("BOGUS AGREEMENTS") that I have entered into with your employer, its partners, licensors, agents and assigns, in perpetuity, without prejudice to my ongoing rights and privileges. You further represent that you have the authority to release me from any BOGUS AGREEMENTS on behalf of your employer.

ISSN: 3066-764X
